In a scene from “Star Wars: Episode IV — A New Hope,” R2D2 projects a three-dimensional hologram of Princess Leia making a desperate plea for help. That scene, filmed more than 45 years ago, involved a bit of movie magic — even today, we don’t have the technology to create such realistic and dynamic holograms.

Generating a freestanding 3D hologram would require extremely precise and fast control of light beyond the capabilities of existing technologies, which are based on liquid crystals or micromirrors.

An international group of researchers, led by a team at MIT, spent more than four years tackling this problem of high-speed optical beam forming. They have now demonstrated a programmable, wireless device that can control light, such as by focusing a beam in a specific direction or manipulating the light’s intensity, and do it orders of magnitude more quickly than commercial devices.

They also pioneered a fabrication process that ensures the device quality remains near-perfect when it is manufactured at scale. This would make their device more feasible to implement in real-world settings.

Known as a spatial light modulator, the device could be used to create super-fast lidar (light detection and ranging) sensors for self-driving cars, which could image a scene about a million times faster than existing mechanical systems. It could also accelerate brain scanners, which use light to “see” through tissue. By being able to image tissue faster, the scanners could generate higher-resolution images that aren’t affected by noise from dynamic fluctuations in living tissue, like flowing blood.

“We are focusing on controlling light, which has been a recurring research theme since antiquity. Our development is another major step toward the ultimate goal of complete optical control — in both space and time — for the myriad applications that use light,” says lead author Christopher Panuski PhD ’22, who recently graduated with his PhD in electrical engineering and computer science.

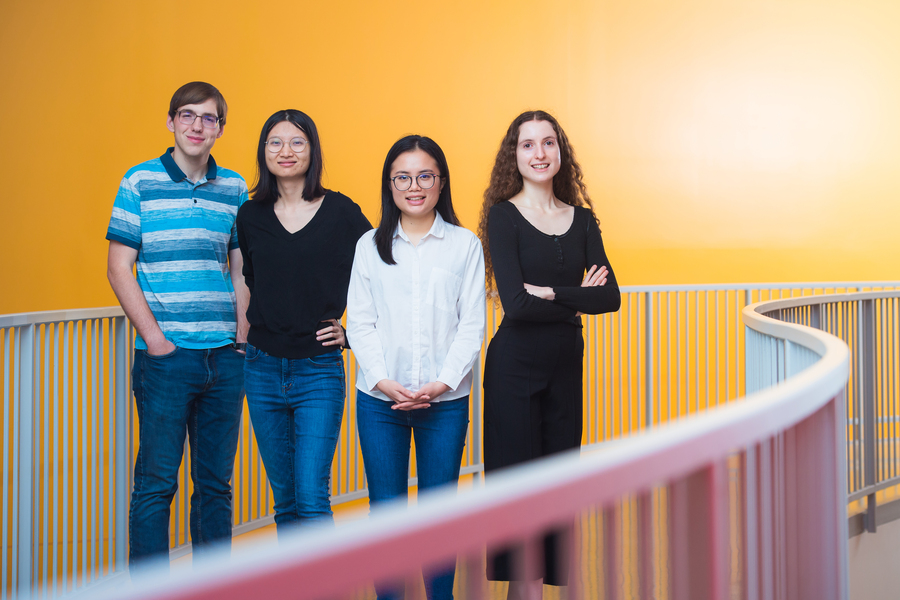

The paper is a collaboration between researchers at MIT; Flexcompute, Inc.; the University of Strathclyde; the State University of New York Polytechnic Institute; Applied Nanotools, Inc.; the Rochester Institute of Technology; and the U.S. Air Force Research Laboratory. The senior author is Dirk Englund, an associate professor of electrical engineering and computer science at MIT and a researcher in the Research Laboratory of Electronics (RLE) and Microsystems Technology Laboratories (MTL). The research is published today in Nature Photonics.

Manipulating light

A spatial light modulator (SLM) is a device that manipulates light by controlling its emission properties. Similar to an overhead projector or computer screen, an SLM transforms a passing beam of light, focusing it in one direction or refracting it to many locations for image formation.

Inside the SLM, a two-dimensional array of optical modulators controls the light. But light wavelengths are only a few hundred nanometers, so to precisely control light at high speeds the device needs an extremely dense array of nanoscale controllers. The researchers used an array of photonic crystal microcavities to achieve this goal. These photonic crystal resonators allow light to be controllably stored, manipulated, and emitted at the wavelength scale.

When light enters a cavity, it is held for about a nanosecond, bouncing around more than 100,000 times before leaking out into space. While a nanosecond is only one billionth of a second, this is enough time for the device to precisely manipulate the light. By varying the reflectivity of a cavity, the researchers can control how light escapes. Simultaneously controlling the array modulates an entire light field, so the researchers can quickly and precisely steer a beam of light.
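The two figures in this paragraph (about a nanosecond of storage, more than 100,000 round trips) can be sanity-checked with a back-of-envelope calculation. The sketch below assumes a laser wavelength of 1,550 nanometers, a common telecom value that the article does not state, and uses the number of optical cycles as a rough stand-in for the number of "bounces." The function names are illustrative only.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0

def optical_cycles(wavelength_m, storage_time_s):
    """Number of optical oscillations that fit into the storage time."""
    frequency_hz = SPEED_OF_LIGHT_M_PER_S / wavelength_m
    return frequency_hz * storage_time_s

def quality_factor(wavelength_m, lifetime_s):
    """Textbook relation Q = omega * tau for an energy decay time tau."""
    omega = 2 * math.pi * SPEED_OF_LIGHT_M_PER_S / wavelength_m
    return omega * lifetime_s

# Assumed wavelength (not given in the article) and the ~1 ns storage time.
wavelength = 1.55e-6
lifetime = 1e-9

print(f"optical cycles in 1 ns: {optical_cycles(wavelength, lifetime):,.0f}")
print(f"implied quality factor: {quality_factor(wavelength, lifetime):.2e}")
```

Under that assumed wavelength, a nanosecond holds a bit under 200,000 optical cycles, which is consistent in order of magnitude with the "more than 100,000" quoted above.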

“One novel aspect of our device is its engineered radiation pattern. We want the reflected light from each cavity to be a focused beam because that improves the beam-steering performance of the final device. Our process essentially makes an ideal optical antenna,” Panuski says.

To achieve this goal, the researchers developed a new algorithm to design photonic crystal devices that form light into a narrow beam as it escapes each cavity, he explains.
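The beam steering described in this section can be illustrated with a standard phased-array calculation: if each emitter in a row adds a phase that grows linearly along the row, the combined far-field beam tilts to a chosen angle. The following is a minimal one-dimensional sketch, not the researchers' design. The wavelength, emitter spacing, and emitter count are assumed values picked for illustration, and every emitter is treated as an ideal point source.

```python
import cmath
import math

WAVELENGTH_UM = 1.55  # assumed laser wavelength, not from the article
PITCH_UM = 4.0        # assumed spacing between neighboring emitters
N_EMITTERS = 64       # assumed number of emitters in one row

def steering_phases(target_deg):
    """Linear phase ramp that points the main beam at target_deg."""
    k = 2 * math.pi / WAVELENGTH_UM
    s = math.sin(math.radians(target_deg))
    return [-k * n * PITCH_UM * s for n in range(N_EMITTERS)]

def array_factor(phases, angle_deg):
    """Normalized far-field amplitude of the row at angle_deg (0 to 1)."""
    k = 2 * math.pi / WAVELENGTH_UM
    s = math.sin(math.radians(angle_deg))
    field = sum(
        cmath.exp(1j * (k * n * PITCH_UM * s + phi))
        for n, phi in enumerate(phases)
    )
    return abs(field) / len(phases)

def peak_angle(phases, span_deg=10.0, step_deg=0.01):
    """Scan angles in [-span_deg, span_deg] and return the brightest one."""
    n_steps = int(round(2 * span_deg / step_deg))
    angles = [-span_deg + i * step_deg for i in range(n_steps + 1)]
    return max(angles, key=lambda a: array_factor(phases, a))

phases = steering_phases(5.0)
print("beam peak (degrees):", round(peak_angle(phases), 2))
```

Changing the argument of `steering_phases` re-points the beam with no moving parts, which is the basic reason a fast modulator array can scan a scene far more quickly than a mechanical system.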

Using light to control light

The team used a micro-LED display to control the SLM. The LED pixels line up with the photonic crystals on the silicon chip, so turning on one LED tunes a single microcavity. When a laser hits that activated microcavity, the cavity responds differently to the laser based on the light from the LED.
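One way to picture why the cavity "responds differently" is the textbook model of a single resonator probed in reflection: the reflected light depends on how far the laser is detuned from the cavity resonance, so shifting the resonance changes the reflection. The sketch below uses that generic model with hypothetical numbers. It is an illustration of the principle, not the device physics reported by the researchers, and the size of the LED-induced shift is an assumption.

```python
import cmath

def reflection_coefficient(detuning, kappa_ext, kappa_int=0.0):
    """Complex reflection of a one-port resonator (generic textbook model).

    detuning:  laser frequency minus cavity resonance
    kappa_ext: energy decay rate into the outgoing beam
    kappa_int: energy decay rate into internal loss
    All three share the same units (for example, GHz).
    """
    kappa = kappa_ext + kappa_int
    return 1 - kappa_ext / (kappa / 2 - 1j * detuning)

LINEWIDTH = 1.0         # cavity linewidth, arbitrary units
SHIFT_WHEN_LED_ON = 5.0 # hypothetical resonance shift, in linewidths

# LED off: the laser sits exactly on resonance.
r_off = reflection_coefficient(0.0, LINEWIDTH)
# LED on: the resonance has moved, so the laser is now detuned.
r_on = reflection_coefficient(SHIFT_WHEN_LED_ON, LINEWIDTH)

print("LED off: amplitude", round(abs(r_off), 3), "phase", round(cmath.phase(r_off), 3))
print("LED on:  amplitude", round(abs(r_on), 3), "phase", round(cmath.phase(r_on), 3))
```

In this lossless toy case the reflected amplitude stays the same while the phase changes substantially between the two states, which is the kind of per-pixel control a beam-steering array needs.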

“This application of high-speed LED-on-CMOS displays as micro-scale optical pump sources is a perfect example of the benefits of integrated photonic technologies and open collaboration. We have been thrilled to work with the team at MIT on this ambitious project,” says Michael Strain, professor at the Institute of Photonics of the University of Strathclyde.

The use of LEDs to control the device means the array is not only programmable and reconfigurable, but also completely wireless, Panuski says.

“It is an all-optical control process. Without metal wires, we can place devices closer together without worrying about absorption losses,” he adds.

Figuring out how to fabricate such a complex device in a scalable fashion was a years-long process. The researchers wanted to use the same techniques that create integrated circuits for computers, so the device could be mass produced. But microscopic deviations occur in any fabrication process, and with micron-sized cavities on the chip, those tiny deviations could lead to huge fluctuations in performance.

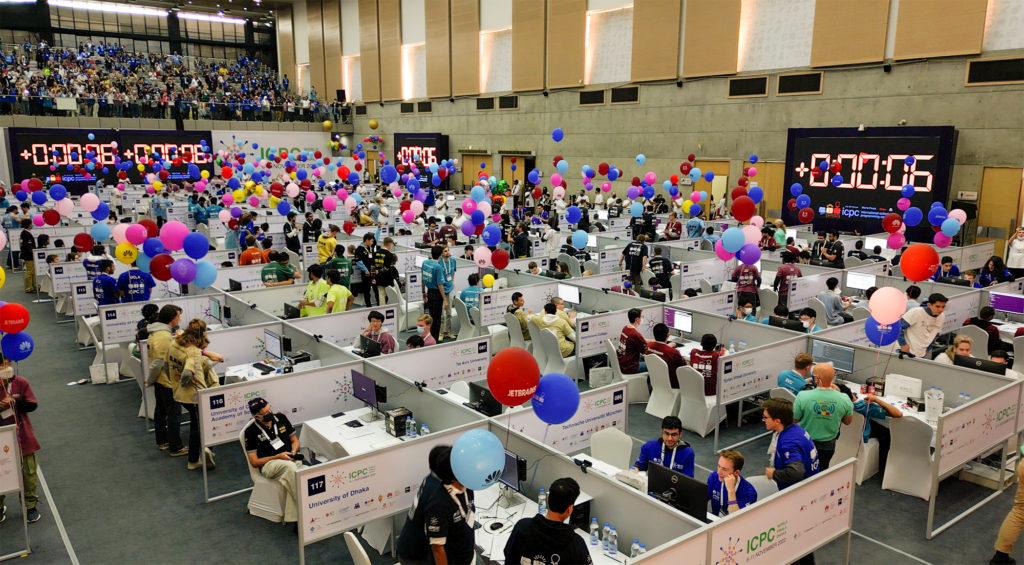

The researchers partnered with the Air Force Research Laboratory to develop a highly precise mass-manufacturing process that stamps billions of cavities onto a 12-inch silicon wafer. Then they incorporated a postprocessing step to ensure the microcavities all operate at the same wavelength.

“Getting a device architecture that would actually be manufacturable was one of the huge challenges at the outset. I think it only became possible because Chris worked closely for years with Mike Fanto and a wonderful team of engineers and scientists at AFRL, AIM Photonics, and with our other collaborators, and because Chris invented a new technique for machine vision-based holographic trimming,” says Englund.

For this “trimming” process, the researchers shine a laser onto the microcavities. The laser heats the silicon to more than 1,000 degrees Celsius, creating silicon dioxide, or glass. The researchers created a system that blasts all the cavities with the same laser at once, adding a layer of glass that perfectly aligns the resonances, that is, the natural frequencies at which the cavities store light.
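The idea of aligning many resonances in parallel can be sketched as a simple feedback loop: measure every cavity, expose only the ones that are still off target, and repeat. The toy simulation below is not the researchers' algorithm. It assumes that each exposure nudges a resonance by a fixed small step in one direction only (toward shorter wavelength), so the target is the shortest wavelength already present. The step size, tolerance, and starting spread are hypothetical.

```python
import random

def trim_in_parallel(resonances_nm, shift_per_round_nm=0.01,
                     tolerance_nm=0.02, max_rounds=1000):
    """Toy model of parallel trimming.

    Assumption: one exposure lowers a resonance wavelength by a fixed
    step and can never raise it, so every cavity is walked down toward
    the smallest starting value.
    """
    target = min(resonances_nm)
    trimmed = list(resonances_nm)
    for _ in range(max_rounds):
        # "Measure" the array and pick the cavities still out of tolerance.
        pending = [i for i, w in enumerate(trimmed) if w - target > tolerance_nm]
        if not pending:
            break
        # Expose all pending cavities in the same round.
        for i in pending:
            trimmed[i] -= shift_per_round_nm
    return trimmed

rng = random.Random(0)
# Hypothetical as-fabricated resonances spread over 2 nm.
start = [1550.0 + rng.uniform(0.0, 2.0) for _ in range(100)]
end = trim_in_parallel(start)

print("spread before (nm):", round(max(start) - min(start), 3))
print("spread after  (nm):", round(max(end) - min(end), 3))
```

Because every out-of-tolerance cavity is exposed in the same round, the number of rounds depends on the worst initial offset rather than on the number of cavities, which is what makes a parallel approach attractive at wafer scale.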

“After modifying some properties of the fabrication process, we showed that we were able to make world-class devices in a foundry process that had very good uniformity. That is one of the big aspects of this work — figuring out how to make these manufacturable,” Panuski says.

The device demonstrated near-perfect control — in both space and time — of an optical field with a joint “spatiotemporal bandwidth” 10 times greater than that of existing SLMs. Being able to precisely control a huge bandwidth of light could enable devices that can carry massive amounts of information extremely quickly, such as high-performance communications systems.

Now that they have perfected the fabrication process, the researchers are working to make larger devices for quantum control or ultrafast sensing and imaging.

This research was funded, in part, by the Hertz Foundation, the NDSEG Fellowship Program, the Schmidt Postdoctoral Award, the Israeli Vatat Scholarship, the U.S. Army Research Office, the U.S. Air Force Research Laboratory, the UK’s Engineering and Physical Sciences Research Council, and the Royal Academy of Engineering.