EECS introduces students to major concepts in electrical engineering and computer science in an integrated and hands-on fashion. As students progress to increasingly advanced subjects, they gain considerable flexibility in shaping their own educational experiences.

The department offers several majors:

- 6-3: Computer Science and Engineering

- 6-4: Artificial Intelligence and Decision Making

- 6-5: Electrical Engineering with Computing

- 6-7: Computer Science and Molecular Biology

- 6-14: Computer Science, Economics, and Data Science

Students can also major in 6-9: Computation and Cognition (administered by the Department of Brain and Cognitive Sciences) or 11-6: Urban Science and Planning with Computer Science (administered by the Department of Urban Studies and Planning).

Curriculum Overview

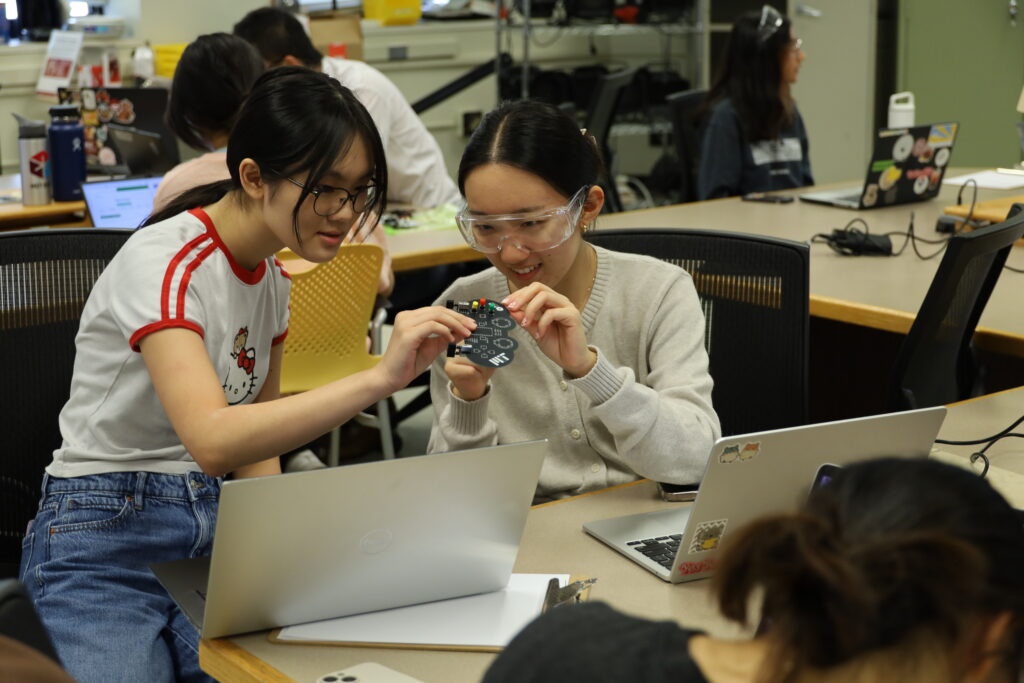

Most EECS majors begin with a choice of introductory subject, exploring electrical engineering and computer science fundamentals by working on concrete systems such as robots, cell phone networks, and medical devices.

Students gain understanding, competence, and maturity by advancing step by step through subjects of increasing complexity:

- Foundation subjects build depth and breadth in areas ranging from circuits and electronics to applied electromagnetics and from principles of software development to signals and systems. Students must take three or four, depending on their major.

- Header subjects provide mastery within EECS’s subdisciplines: microelectronic devices and circuits; communication, control and signal processing; bioelectrical science and engineering; computer systems engineering; design and analysis of algorithms; and artificial intelligence. Students must take at least three header subjects and one lab.

- Advanced undergraduate subjects enable students to target their degree program toward in-depth mastery of areas matching their specific interests. Two advanced subjects are required.

Throughout the undergraduate years, laboratory subjects, teamwork, independent projects, and research engage students with principles and techniques of analysis, design, and experimentation in a variety of EECS areas. The department also offers numerous programs that enable students to gain practical experience, ranging from collaborative industrial projects done on campus to term-long experiences at partner companies.