By studying changes in gene expression, researchers learn how cells function at a molecular level, which could help them understand the development of certain diseases.

But a human has about 20,000 genes that can affect each other in complex ways, so even knowing which groups of genes to target is an enormously complicated problem. Also, genes work together in modules that regulate each other.

MIT researchers have now developed theoretical foundations for methods that could identify the best way to aggregate genes into related groups so they can efficiently learn the underlying cause-and-effect relationships between many genes.

Importantly, the new method does so using only observational data. This means researchers don’t need to perform costly, and sometimes infeasible, interventional experiments to obtain the data needed to infer the underlying causal relationships.

In the long run, this technique could help scientists identify potential gene targets to induce certain behavior in a more accurate and efficient manner, potentially enabling them to develop precise treatments for patients.

“In genomics, it is very important to understand the mechanism underlying cell states. But cells have a multiscale structure, so the level of summarization is very important, too. If you figure out the right way to aggregate the observed data, the information you learn about the system should be more interpretable and useful,” says graduate student Jiaqi Zhang, an Eric and Wendy Schmidt Center Fellow and co-lead author of a paper on this technique.

Zhang is joined on the paper by co-lead author Ryan Welch, currently a master’s student in engineering; and senior author Caroline Uhler, a professor in the Department of Electrical Engineering and Computer Science (EECS) and the Institute for Data, Systems, and Society (IDSS) who is also director of the Eric and Wendy Schmidt Center at the Broad Institute of MIT and Harvard, and a researcher at MIT’s Laboratory for Information and Decision Systems (LIDS). The research will be presented at the Conference on Neural Information Processing Systems.

Learning from observational data

The problem the researchers set out to tackle involves learning programs of genes. These programs describe which genes function together to regulate other genes in a biological process, such as cell development or differentiation.

Since scientists can’t efficiently study how all 20,000 genes interact, they use a technique called causal disentanglement to learn how to combine related groups of genes into a representation that allows them to efficiently explore cause-and-effect relationships.

In previous work, the researchers demonstrated how this could be done effectively in the presence of interventional data, which are data obtained by perturbing variables in the network.

But it is often expensive to conduct interventional experiments, and in some scenarios such experiments are unethical or the technology is not good enough for the intervention to succeed.

With only observational data, researchers can’t compare genes before and after an intervention to learn how groups of genes function together.
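To make the distinction concrete, here is a minimal sketch of the two data regimes. The two-gene model, the function name `sample_cell`, and all the numbers are hypothetical and chosen only for illustration; they do not come from the researchers' work.

```python
import random
import statistics


def sample_cell(rng, knock_down_a=False):
    """Draw one sample from a hypothetical model in which gene A drives gene B."""
    # Observational regime: A follows its natural distribution.
    # Interventional regime: A is clamped to 0, mimicking a knockdown.
    a = 0.0 if knock_down_a else rng.gauss(5.0, 1.0)
    b = 2.0 * a + rng.gauss(0.0, 1.0)  # B depends causally on A
    return a, b


rng = random.Random(0)
observational = [sample_cell(rng) for _ in range(5000)]
interventional = [sample_cell(rng, knock_down_a=True) for _ in range(5000)]

mean_b_obs = statistics.fmean(b for _, b in observational)
mean_b_int = statistics.fmean(b for _, b in interventional)
print(f"mean of B, observational:        {mean_b_obs:.2f}")
print(f"mean of B, after knocking down A: {mean_b_int:.2f}")
```

Comparing the two printed means reveals that A drives B. With the observational samples alone, that before-and-after comparison is not available, which is the setting the researchers address.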

“Most research in causal disentanglement assumes access to interventions, so it was unclear how much information you can disentangle with just observational data,” Zhang says.

The MIT researchers developed a more general approach that uses a machine-learning algorithm to effectively identify and aggregate groups of observed variables, e.g., genes, using only observational data.

They can use this technique to identify causal modules and reconstruct an accurate underlying representation of the cause-and-effect mechanism.

“While this research was motivated by the problem of elucidating cellular programs, we first had to develop novel causal theory to understand what could and could not be learned from observational data. With this theory in hand, in future work we can apply our understanding to genetic data and identify gene modules as well as their regulatory relationships,” Uhler says.

A layerwise representation

Using statistical techniques, the researchers can compute the variance of the Jacobian of each variable’s score. Causal variables that don’t affect any subsequent variables should have a variance of zero.
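The zero-variance idea can be illustrated on a toy example. The sketch below is not the researchers' algorithm. It assumes that "score" means the gradient of the log density, and it uses a hypothetical two-variable model with additive Gaussian noise (`x2 = x1**2 + noise`) whose score can be written out by hand. In this kind of model, the diagonal Jacobian entry of a variable with no downstream effects is constant, so its variance is zero.

```python
import random
import statistics


def score_jacobian_diagonal(x1, x2):
    """Diagonal of the Jacobian of the score for the toy model.

    Assumed model: x1 ~ N(0, 1), x2 = x1**2 + N(0, 1), so
    log p(x1, x2) = -x1**2 / 2 - (x2 - x1**2)**2 / 2 + const.
    """
    d_s1_dx1 = -1.0 + 2.0 * x2 - 6.0 * x1 ** 2  # changes from sample to sample
    d_s2_dx2 = -1.0                             # constant: x2 affects nothing
    return d_s1_dx1, d_s2_dx2


rng = random.Random(0)
diag_x1, diag_x2 = [], []
for _ in range(2000):
    x1 = rng.gauss(0.0, 1.0)
    x2 = x1 ** 2 + rng.gauss(0.0, 1.0)
    d1, d2 = score_jacobian_diagonal(x1, x2)
    diag_x1.append(d1)
    diag_x2.append(d2)

var_x1 = statistics.pvariance(diag_x1)
var_x2 = statistics.pvariance(diag_x2)
print(f"variance for x1 (has a downstream variable): {var_x1:.3f}")
print(f"variance for x2 (no downstream variable):    {var_x2:.3f}")
```

Here the derivatives are computed from a known density. With real data the score would have to be estimated, so a variance would be compared against a small tolerance rather than against exactly zero.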

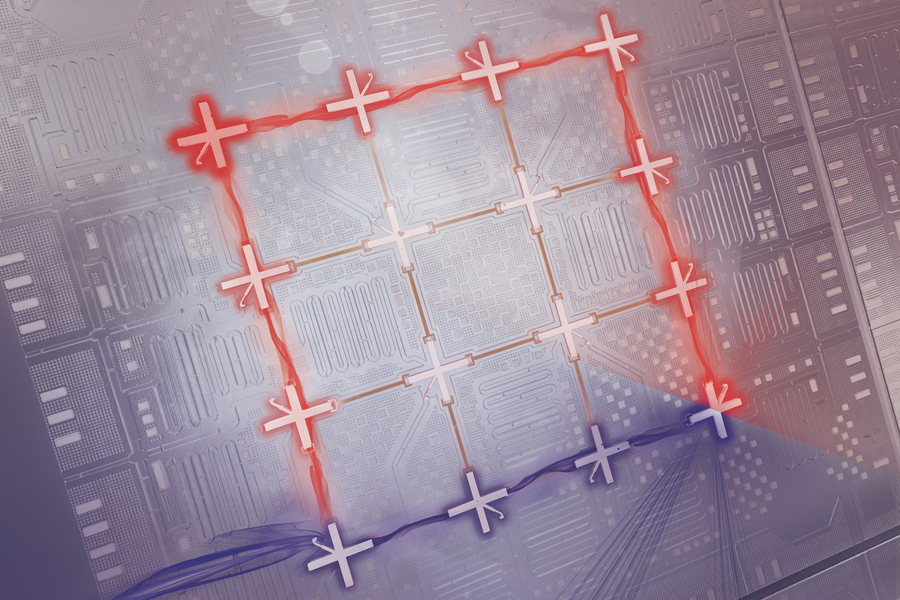

The researchers reconstruct the representation in a layer-by-layer structure, starting by removing the variables in the bottom layer that have a variance of zero. Then they work backward, layer-by-layer, removing the variables with zero variance to determine which variables, or groups of genes, are connected.
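The layer-by-layer procedure can be sketched on a small example. This is a simplified illustration, not the researchers' method: it works on directly observed variables, it uses a hypothetical four-variable graph (`x0` feeds `x1` and `x2`, which together feed `x3`), and it reads derivatives off a known log density instead of estimating them from data. The names `MECHANISMS`, `log_density`, and `peel_layers` are made up for this sketch.

```python
import math
import random
import statistics

# Hypothetical toy graph with unit-variance Gaussian noise on every variable:
# x0 -> x1, x0 -> x2, (x1, x2) -> x3.
MECHANISMS = {
    0: lambda x: 0.0,
    1: lambda x: math.sin(x[0]),
    2: lambda x: 0.5 * x[0] ** 2,
    3: lambda x: math.sin(x[1] + x[2]),
}


def sample(rng):
    x = [0.0] * len(MECHANISMS)
    for j in range(len(MECHANISMS)):  # indices are in topological order
        x[j] = MECHANISMS[j](x) + rng.gauss(0.0, 1.0)
    return x


def log_density(x, active):
    # Unnormalized log density of the variables still in play. Restricting the
    # sum is valid because each step removes only variables with no remaining
    # children, so every kept variable still has all of its parents.
    return sum(-0.5 * (x[j] - MECHANISMS[j](x)) ** 2 for j in active)


def score_jacobian_diag(x, j, active, h=1e-2):
    # Central finite difference for the second derivative of the log density
    # with respect to x[j], i.e. the j-th diagonal entry of the score Jacobian.
    up, down = list(x), list(x)
    up[j] += h
    down[j] -= h
    return (log_density(up, active) - 2.0 * log_density(x, active)
            + log_density(down, active)) / h ** 2


def peel_layers(samples, tol=1e-6):
    active = set(range(len(samples[0])))
    layers = []
    while active:
        layer = sorted(
            j for j in active
            if statistics.pvariance(
                [score_jacobian_diag(x, j, active) for x in samples]) < tol
        )
        if not layer:
            break  # nothing looks like a bottom-layer variable; stop
        layers.append(layer)
        active -= set(layer)
    return layers


rng = random.Random(0)
samples = [sample(rng) for _ in range(300)]
layers = peel_layers(samples)
print(layers)  # bottom layer first
```

For this toy graph, the first pass removes `x3`, the second removes `x1` and `x2` together, and the last removes `x0`, so the printed list recovers the three layers from the bottom up.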

“Identifying the variances that are zero quickly becomes a combinatorial objective that is pretty hard to solve, so deriving an efficient algorithm that could solve it was a major challenge,” Zhang says.

In the end, their method outputs an abstracted representation of the observed data with layers of interconnected variables that accurately summarizes the underlying cause-and-effect structure.

Each variable represents an aggregated group of genes that function together, and the relationship between two variables represents how one group of genes regulates another. Their method effectively captures all the information used in determining each layer of variables.

After proving that their technique was theoretically sound, the researchers conducted simulations to show that the algorithm can efficiently disentangle meaningful causal representations using only observational data.

In the future, the researchers want to apply this technique in real-world genetics applications. They also want to explore how their method could provide additional insights in situations where some interventional data are available, or help scientists understand how to design effective genetic interventions.

Ultimately, this method could help researchers more efficiently determine which genes function together in the same program, which could help identify drugs that target those genes to treat certain diseases.

This research is funded, in part, by the U.S. Office of Naval Research, the National Institutes of Health, the U.S. Department of Energy, a Simons Investigator Award, the Eric and Wendy Schmidt Center at the Broad Institute, the Advanced Undergraduate Research Opportunities Program at MIT, and an Apple AI/ML PhD Fellowship.