MIT researchers have developed a battery-free, self-powered sensor that can harvest energy from its environment.

Because it requires no battery that must be recharged or replaced, and no special wiring, such a sensor could be embedded in a hard-to-reach place, like inside the inner workings of a ship’s engine. There, it could automatically gather data on the machine’s power consumption and operations for long periods.

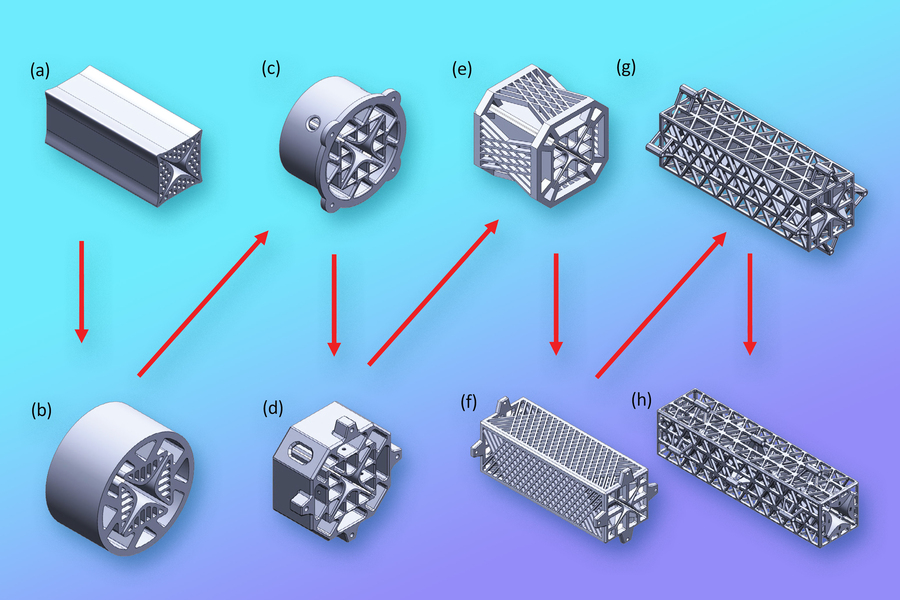

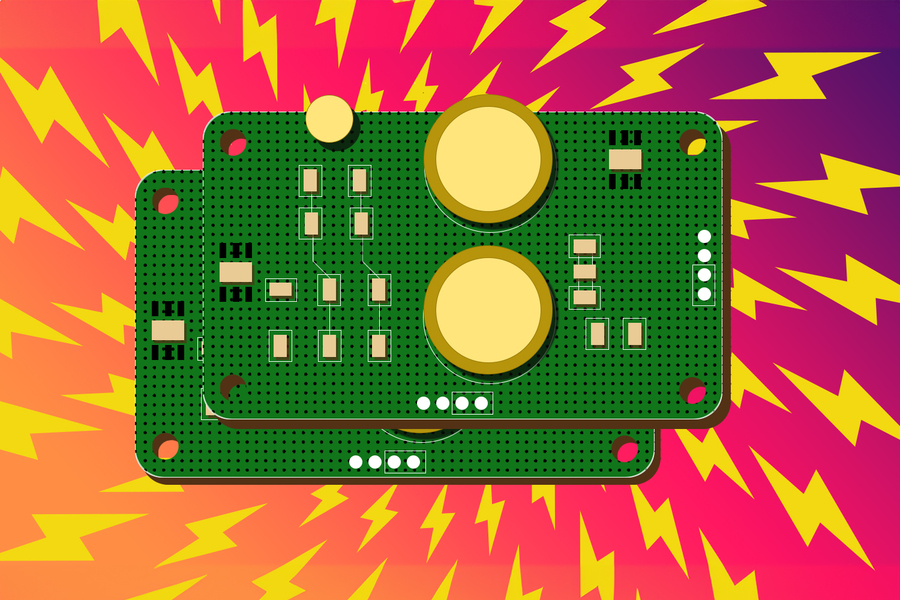

The researchers built a temperature-sensing device that harvests energy from the magnetic field generated in the open air around a wire. One could simply clip the sensor around a wire that carries electricity (perhaps the wire that powers a motor), and it will automatically harvest and store energy, which it uses to monitor the motor’s temperature.

“This is ambient power — energy that I don’t have to make a specific, soldered connection to get. And that makes this sensor very easy to install,” says Steve Leeb, the Emanuel E. Landsman Professor of Electrical Engineering and Computer Science (EECS) and professor of mechanical engineering, a member of the Research Laboratory of Electronics, and senior author of a paper on the energy-harvesting sensor.

In the paper, which appeared as the featured article in the January issue of the IEEE Sensors Journal, the researchers offer a design guide for an energy-harvesting sensor that lets an engineer balance the available energy in the environment with their sensing needs.

The paper lays out a roadmap for the key components of a device that can sense and control the flow of energy continually during operation.

The versatile design framework is not limited to sensors that harvest magnetic field energy, and can be applied to those that use other power sources, like vibrations or sunlight. It could be used to build networks of sensors for factories, warehouses, and commercial spaces that cost less to install and maintain.

“We have provided an example of a battery-less sensor that does something useful, and shown that it is a practically realizable solution. Now others will hopefully use our framework to get the ball rolling to design their own sensors,” says lead author Daniel Monagle, an EECS graduate student.

Monagle and Leeb are joined on the paper by EECS graduate student Eric Ponce.

John Donnal, an associate professor of weapons and controls engineering at the U.S. Naval Academy who was not involved with this work, studies techniques to monitor ship systems. Getting access to power on a ship can be difficult, he says, since there are very few outlets and strict restrictions on what equipment can be plugged in.

“Persistently measuring the vibration of a pump, for example, could give the crew real-time information on the health of the bearings and mounts, but powering a retrofit sensor often requires so much additional infrastructure that the investment is not worthwhile,” Donnal adds. “Energy-harvesting systems like this could make it possible to retrofit a wide variety of diagnostic sensors on ships and significantly reduce the overall cost of maintenance.”

A how-to guide

The researchers had to meet three key challenges to develop an effective, battery-free, energy-harvesting sensor.

First, the system must be able to cold start, meaning it can fire up its electronics with no initial voltage. They accomplished this with a network of integrated circuits and transistors that allow the system to store energy until it reaches a certain threshold. The system will only turn on once it has stored enough power to fully operate.
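The threshold behavior described above can be illustrated with a minimal sketch. It is not the researchers' circuit: the `ColdStartGate` class, the voltage thresholds, and the turn-off hysteresis are all assumptions made for illustration, and the storage element is modeled as an ideal capacitor charged by a constant current.

```python
from dataclasses import dataclass


@dataclass
class ColdStartGate:
    """Hypothetical model of a threshold-gated power switch (values are assumed)."""
    v_on: float = 3.0    # assumed turn-on threshold, volts
    v_off: float = 2.2   # assumed turn-off threshold (hysteresis), volts
    enabled: bool = False

    def update(self, v_store: float) -> bool:
        """Update the switch state from the storage voltage and return it."""
        if not self.enabled and v_store >= self.v_on:
            self.enabled = True    # enough energy stored: power the electronics
        elif self.enabled and v_store <= self.v_off:
            self.enabled = False   # storage depleted: shut down and recharge
        return self.enabled


def time_to_cold_start(capacitance_f, harvest_current_a, dt_s, steps, gate):
    """Charge an ideal capacitor from 0 V at constant current.

    Returns the time in seconds at which the gate first enables,
    or None if the threshold is not reached within `steps` steps.
    """
    v_store = 0.0
    for step in range(1, steps + 1):
        v_store += harvest_current_a * dt_s / capacitance_f  # dV = I * dt / C
        if gate.update(v_store):
            return step * dt_s
    return None
```

The gap between the two thresholds keeps the electronics from switching on and off repeatedly when the stored voltage hovers near a single threshold.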

Second, the system must store and convert the energy it harvests efficiently, and without a battery. While the researchers could have included a battery, that would add extra complexities to the system and could pose a fire risk.

“You might not even have the luxury of sending out a technician to replace a battery. Instead, our system is maintenance-free. It harvests energy and operates itself,” Monagle adds.

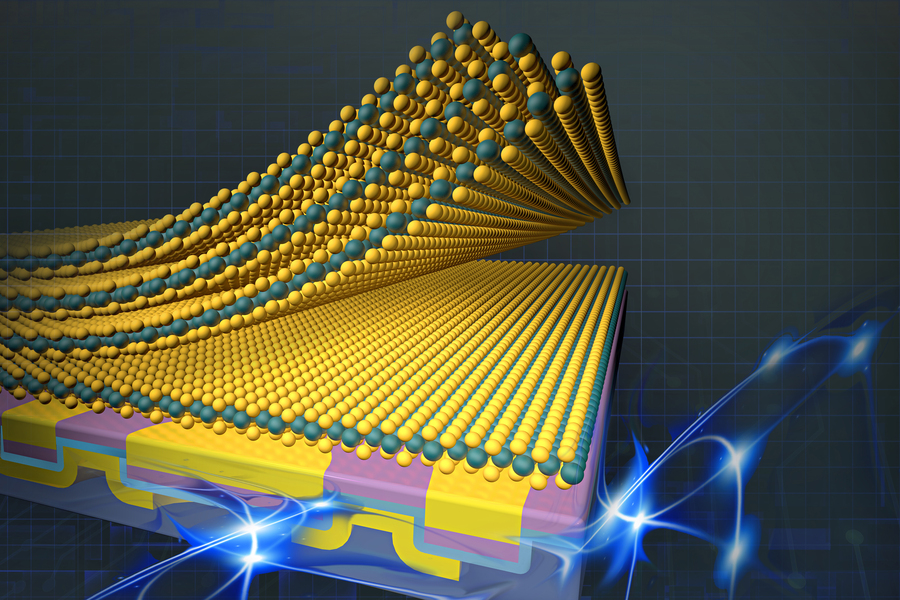

To avoid using a battery, they incorporate internal energy storage that can include a series of capacitors. Simpler than a battery, a capacitor stores energy in the electric field between conductive plates. Capacitors can be made from a variety of materials, and their capabilities can be tuned to a range of operating conditions, safety requirements, and available space.

The team carefully designed the capacitors so they are big enough to store the energy the device needs to turn on and start harvesting power, but small enough that the charge-up phase doesn’t take too long.

In addition, since a sensor might go weeks or even months before turning on to take a measurement, they ensured the capacitors can hold enough energy even if some leaks out over time.
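The sizing trade-off can be made concrete with textbook capacitor relations. The sketch below is not taken from the paper: it assumes an ideal capacitor (stored energy of one half times capacitance times voltage squared), constant harvested power, and leakage modeled as a single parallel resistance. All function and parameter names are hypothetical.

```python
import math


def usable_energy_j(capacitance_f, v_max, v_min):
    """Energy released when an ideal capacitor discharges from v_max to v_min."""
    return 0.5 * capacitance_f * (v_max ** 2 - v_min ** 2)


def charge_time_s(capacitance_f, v_target, harvest_power_w):
    """Time to charge from 0 V to v_target at constant harvested power (no losses)."""
    return 0.5 * capacitance_f * v_target ** 2 / harvest_power_w


def voltage_after_leak(v_start, capacitance_f, leak_resistance_ohm, idle_time_s):
    """Voltage left after idling, with leakage modeled as a parallel resistor."""
    time_constant_s = leak_resistance_ohm * capacitance_f
    return v_start * math.exp(-idle_time_s / time_constant_s)
```

Under these assumptions, a larger capacitance raises both the usable energy and the charge-up time in proportion, which is the tension the paragraph above describes.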

Finally, they developed a series of control algorithms that dynamically measure and budget the energy collected, stored, and used by the device. A microcontroller, the “brain” of the energy management interface, constantly checks how much energy is stored and decides whether to turn the sensor on or off, take a measurement, or kick the harvester into a higher gear so it can gather more energy for more complex sensing needs.
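One way to picture this kind of energy budgeting is a simple decision rule. The sketch below is illustrative only and is not the authors' algorithm: the `Action` names, the per-operation energy costs, and the reserve kept in storage are all assumed.

```python
from enum import Enum


class Action(Enum):
    SLEEP = "sleep"
    MEASURE = "measure"
    MEASURE_AND_TRANSMIT = "measure_and_transmit"
    BOOST_HARVEST = "boost_harvest"


def choose_action(stored_j, measure_cost_j, transmit_cost_j, reserve_j, want_transmit):
    """Pick the next action from the stored energy (all quantities in joules).

    `reserve_j` is an assumed safety margin that is never spent, so the
    electronics do not drain the storage below what they need to stay on.
    """
    available_j = stored_j - reserve_j
    if available_j < measure_cost_j:
        return Action.SLEEP                   # cannot afford even a measurement
    if not want_transmit:
        return Action.MEASURE                 # cheap operation, affordable now
    if available_j >= measure_cost_j + transmit_cost_j:
        return Action.MEASURE_AND_TRANSMIT    # full budget is available
    return Action.BOOST_HARVEST               # gather more before transmitting
```

For example, `choose_action(2.0, 0.5, 2.0, 1.0, True)` returns `Action.BOOST_HARVEST`, because only 1.0 J is available above the reserve while measuring and transmitting together would need 2.5 J.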

“Just like when you change gears on a bike, the energy management interface looks at how the harvester is doing, essentially seeing whether it is pedaling too hard or too soft, and then it varies the electronic load so it can maximize the amount of power it is harvesting and match the harvest to the needs of the sensor,” Monagle explains.
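The gear-shifting analogy resembles a general technique often called perturb and observe: nudge the load, check whether harvested power rose or fell, and reverse direction if it fell. The article does not say which method the researchers use, so the sketch below is a stand-in under that assumption. The `measure_power` callback and the load units are hypothetical.

```python
def perturb_and_observe(measure_power, load, step, iterations):
    """Hill-climb `load` toward the value that maximizes measure_power(load).

    `measure_power` is a caller-supplied function returning the harvested
    power at a given load setting. The result settles within a few steps
    of the peak and then oscillates around it.
    """
    last_power = measure_power(load)
    direction = 1
    for _ in range(iterations):
        load += direction * step          # perturb the load
        power = measure_power(load)       # observe the effect
        if power < last_power:
            direction = -direction        # power dropped: reverse direction
        last_power = power
    return load
```

A smaller `step` reduces the oscillation around the peak but takes more iterations to get there.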

Self-powered sensor

Using this design framework, they built an energy management circuit for an off-the-shelf temperature sensor. The device harvests magnetic field energy and uses it to continually sample temperature data, which it sends to a smartphone interface using Bluetooth.

The researchers used ultra-low-power circuits to design the device, but quickly found that these circuits have tight restrictions on how much voltage they can withstand before breaking down. Harvesting too much power could cause the device to explode.

To avoid that, their energy harvester operating system in the microcontroller automatically adjusts or reduces the harvest if the amount of stored energy becomes excessive.
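A minimal sketch of this kind of protection is a scale factor that tapers the harvest as the storage voltage approaches a limit. The linear taper, the function name, and the two voltage parameters are assumptions for illustration, not details from the paper.

```python
def harvest_scale(v_store, v_soft, v_max):
    """Return a harvest scale factor between 0.0 and 1.0.

    Harvesting runs at full rate below `v_soft`, tapers linearly above it,
    and stops entirely at `v_max` (the assumed safe limit of the circuits).
    """
    if v_max <= v_soft:
        raise ValueError("v_max must be greater than v_soft")
    if v_store <= v_soft:
        return 1.0
    if v_store >= v_max:
        return 0.0
    return (v_max - v_store) / (v_max - v_soft)
```

With `v_soft=3.0` and `v_max=4.0`, a storage voltage of 3.5 V gives a scale of 0.5, so the harvester would run at half its normal rate.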

They also found that communication — transmitting data gathered by the temperature sensor — was by far the most power-hungry operation.

“Ensuring the sensor has enough stored energy to transmit data is a constant challenge that involves careful design,” Monagle says.

In the future, the researchers plan to explore less energy-intensive means of transmitting data, such as using optics or acoustics. They also want to more rigorously model and predict how much energy might be coming into a system, or how much energy a sensor might need to take measurements, so a device could effectively gather even more data.

“If you only make the measurements you think you need, you may miss something really valuable. With more information, you might be able to learn something you didn’t expect about a device’s operations. Our framework lets you balance those considerations,” Leeb says.

“This paper is well-documented regarding what a practical self-powered sensor node should internally entail for realistic scenarios. The overall design guidelines, particularly on the cold-start issue, are very helpful,” says Jinyeong Moon, an assistant professor of electrical and computer engineering at Florida A&M University-Florida State University College of Engineering who was not involved with this work. “Engineers planning to design a self-powering module for a wireless sensor node will greatly benefit from these guidelines, easily ticking off traditionally cumbersome cold-start-related checklists.”

The work is supported, in part, by the Office of Naval Research and The Grainger Foundation.