New method could increase LLM training efficiency

By leveraging idle computing time, researchers can double the speed of model training while preserving accuracy.

Antonio Torralba, three MIT alumni, named ACM Fellows

The ACM Fellowship, the highest honor bestowed by the professional organization, goes to registered members of the society selected by their peers for outstanding accomplishments in computing and information technology and/or outstanding service to ACM and the larger computing community.

Charting the future of AI, from safer answers to faster thinking

MIT PhD students who interned with the MIT-IBM Watson AI Lab Summer Program are pushing AI tools to be more flexible, efficient, and grounded in truth.

MIT-IBM Watson AI Lab researchers have developed a universal guide for estimating how large language models will perform based on smaller models in the same family.

Anantha Chandrakasan named MIT provost

A faculty member since 1994, Chandrakasan has also served as dean of engineering and MIT’s inaugural chief innovation and strategy officer, among other roles.

Training LLMs to self-detoxify their language

A new method from the MIT-IBM Watson AI Lab helps large language models steer their own responses toward safer, more ethical, value-aligned outputs.

Could LLMs help design our next medicines and materials?

A new method lets users ask, in plain language, for a new molecule with certain properties, and receive a detailed description of how to synthesize it.

Researchers fuse the best of two popular methods to create an image generator that uses less energy and can run locally on a laptop or smartphone.

A new way to create realistic 3D shapes using generative AI

Researchers propose a simple fix to an existing technique that could help artists, designers, and engineers create better 3D models.

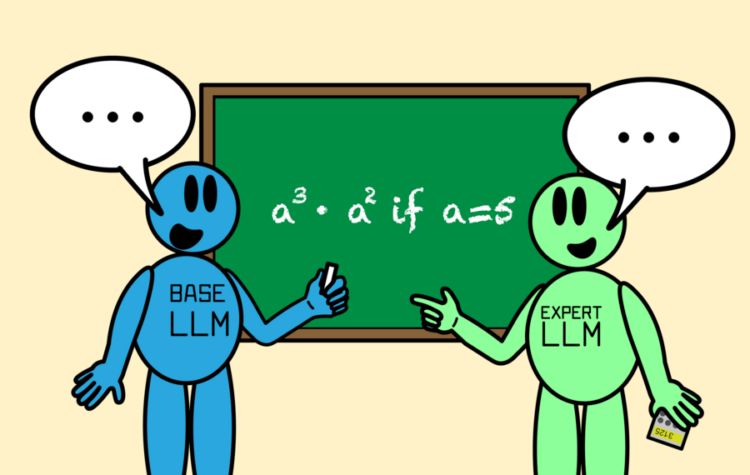

Enhancing LLM collaboration for smarter, more efficient solutions

“Co-LLM” algorithm helps a general-purpose AI model collaborate with an expert large language model by combining the best parts of both answers, leading to more factual responses.