Researchers create the first artificial vision system for both land and water

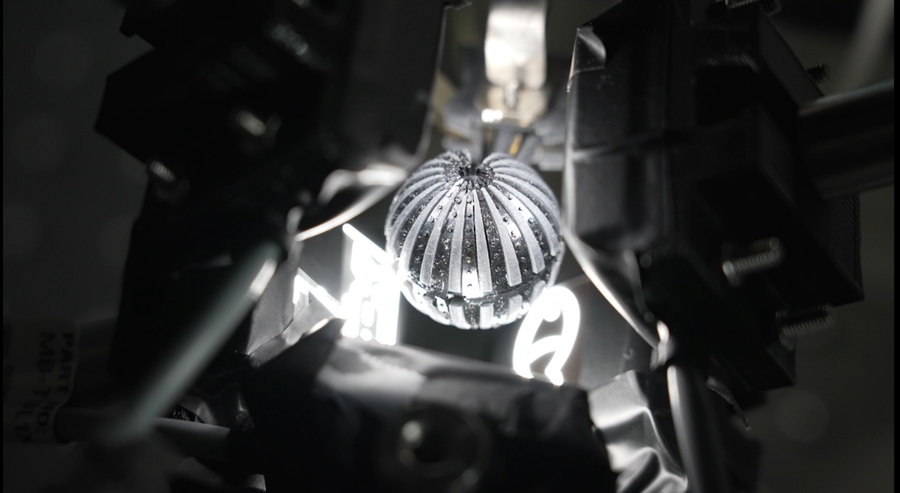

Researchers created the first artificial vision system that can see both on land and underwater. Here, the "eye" is shown with a measurement setup for panoramic imaging. Photo courtesy of the Gwangju Institute of Science and Technology.

Giving our hardware sight has empowered a host of applications in self-driving cars, object detection, and crop monitoring. But unlike animals, synthetic vision systems can’t simply evolve in natural habitats. Dynamic visual systems that can navigate both land and water have therefore yet to power our machines. That gap led researchers from MIT, the Gwangju Institute of Science and Technology (GIST), and Seoul National University in Korea to develop a novel artificial vision system that closely replicates the vision of the fiddler crab and can handle both terrains.

The semi-terrestrial species, known affectionately as the calling crab because it appears to be beckoning with its huge claws, has amphibious imaging ability and an extremely wide field of view, whereas all current artificial systems are limited to a hemispherical one. The new artificial eye, a small, largely nondescript black ball, makes meaning of its inputs through a mixture of materials that process and understand light. The scientists combined an array of flat microlenses with a graded refractive index profile and a flexible photodiode array with comb-shaped patterns, all wrapped on the 3D spherical structure. This configuration means that light rays from multiple sources always converge at the same spot on the image sensor, regardless of the refractive index of the surroundings.
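To see why a flat outer surface helps, consider the standard paraxial formula for the refractive power of a single spherical surface, P = (n_inside - n_outside) / R. The short Python sketch below compares a curved and a flat front surface in air and in water. The lens index and the radius of curvature are illustrative assumptions, not values from the paper, and the sketch covers only the front surface, not the graded-index focusing inside the microlens.

```python
import math

def surface_power(n_outside, n_inside, radius_m):
    """Paraxial power (diopters) of one refracting surface: (n_inside - n_outside) / R."""
    # A flat surface has infinite radius, so its power is 0.0 in any medium.
    return (n_inside - n_outside) / radius_m

N_AIR = 1.000
N_WATER = 1.333
N_LENS = 1.5       # assumed lens index, for illustration only
CURVED_R = 1e-3    # assumed 1 mm radius of curvature, for illustration only

for label, radius in [("curved", CURVED_R), ("flat", math.inf)]:
    p_air = surface_power(N_AIR, N_LENS, radius)
    p_water = surface_power(N_WATER, N_LENS, radius)
    print(f"{label:>6}: {p_air:7.1f} D in air, {p_water:7.1f} D in water")
```

Under these assumptions the curved surface loses about two-thirds of its power when moved from air to water, while the flat surface contributes no power in either medium, so any focusing done inside the lens is unaffected by the change of surroundings.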

A paper on this system, co-authored by Frédo Durand, an MIT professor of electrical engineering and computer science and affiliate of the Computer Science and Artificial Intelligence Laboratory (CSAIL), and 15 others, appears in the July issue of the journal Nature Electronics.

Both the amphibious and panoramic imaging capabilities were tested in in-air and in-water experiments by imaging five objects at different distances and in different directions. The system provided consistent image quality and an almost 360-degree field of view in both terrestrial and aquatic environments. Meaning: It could see both underwater and on land, where previous systems have been limited to a single domain.

There’s more than meets the eye when it comes to fiddler crabs. Behind their massive claws exists a powerful, unique vision system that evolved from living both underwater and on land. The creatures’ flat corneas, combined with a graded refractive index, counter defocusing effects arising from changes in the external environment — an overwhelming limit for other compound eyes. The crabs also have a 3D omnidirectional field of view, from an ellipsoidal and stalk-eye structure. They’ve evolved to look at almost everything at once to avoid attacks on wide-open tidal flats, and to communicate and interact with mates.

To be sure, biomimetic cameras aren’t new. In 2013, a wide field of view (FoV) camera that mimicked the compound eyes of an insect was reported in Nature, and in 2020, a wide FoV camera mimicking a fish eye emerged. While these cameras can capture large areas at once, it’s structurally difficult for them to exceed 180 degrees. More recently, commercial products with a 360-degree FoV have come into play. These can be clunky, though, since they have to merge images taken from two or more cameras. Enlarging the field of view also requires an optical system with a complex configuration, which causes image distortion. And it’s challenging to sustain focusing capability when the surrounding environment changes, such as between air and water. Hence the impetus to look to the calling crab.

The crab proved a worthy muse. During tests, five cutesy objects (dolphin, airplane, submarine, fish, and ship) at different distances were projected onto the artificial vision system from different angles. The team performed multi-laser spot imaging experiments, and the artificial images matched the simulation. To go deep, they immersed the device halfway in water in a container.

A logical extension of the work includes looking at biologically inspired light-adaptation schemes in the quest for higher resolution and superior image-processing techniques.

“This is a spectacular piece of optical engineering and non-planar imaging, combining aspects of bio-inspired design and advanced flexible electronics to achieve unique capabilities unavailable in conventional cameras,” says John A. Rogers, the Louis Simpson and Kimberly Querrey Professor of Materials Science and Engineering, Biomedical Engineering, and Neurological Surgery at Northwestern University, who was not involved in the work. “Potential uses span from population surveillance to environmental monitoring.”

This research was supported by the Institute for Basic Science, the National Research Foundation of Korea, and the GIST-MIT Research Collaboration grant funded by the GIST in 2022.
