When you think about hands-free devices, you might picture Alexa and other voice-activated in-home assistants, Bluetooth earpieces, or asking Siri to make a phone call in your car. You might not imagine using your mouth to communicate with other devices like a computer or a phone remotely.

Thinking outside the box, MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) and Aarhus University researchers have now engineered “MouthIO,” a dental brace that can be fabricated with sensors and feedback components to capture in-mouth interactions and data. This interactive wearable could eventually assist dentists and other doctors with collecting health data and help motor-impaired individuals interact with a phone, computer, or fitness tracker using their mouths.

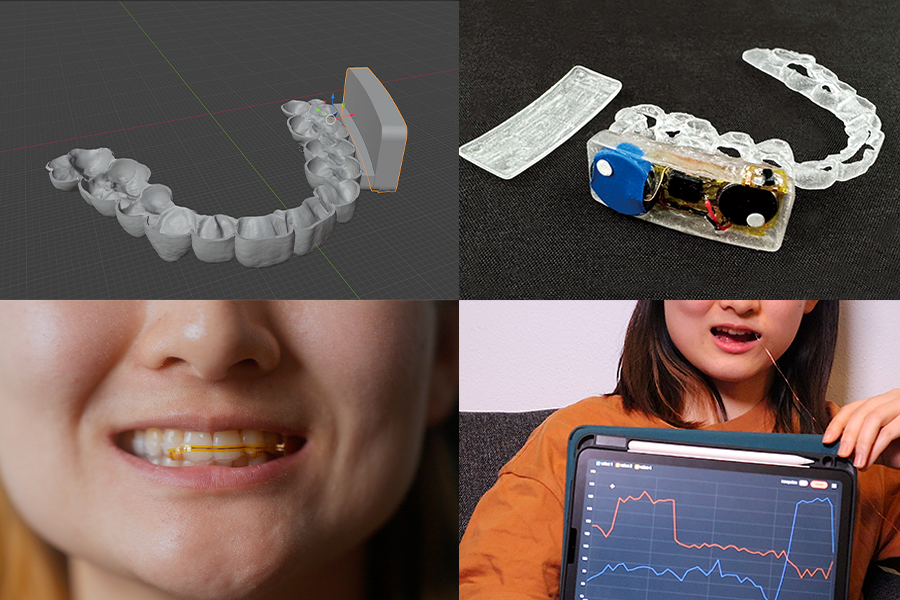

Resembling an electronic retainer, MouthIO is a see-through brace that fits the specifications of your upper or lower set of teeth from a scan. The researchers created a plugin for the modeling software Blender to help users tailor the device to fit a dental scan, where you can then 3D print your design in dental resin. This computer-aided design tool allows users to digitally customize a panel (called PCB housing) on the side to integrate electronic components like batteries, sensors (including detectors for temperature and acceleration, as well as tongue-touch sensors), and actuators (like vibration motors and LEDs for feedback). You can also place small electronics outside of the PCB housing on individual teeth.

Research by others at MIT has also led to another mouth-based touchpad, based on technology initially developed in the Media Lab. That device is available via Augmental, a startup deploying technology that lets people with movement impairments seamlessly interact with their personal computational devices.

The active mouth

“The mouth is a really interesting place for an interactive wearable,” says senior author Michael Wessely, a former CSAIL postdoc and senior author on a paper about MouthIO who is now an assistant professor at Aarhus University. “This compact, humid environment has elaborate geometries, making it hard to build a wearable interface to place inside. With MouthIO, though, we’ve developed an open-source device that’s comfortable, safe, and almost invisible to others. Dentists and other doctors are eager about MouthIO for its potential to provide new health insights, tracking things like teeth grinding and potentially bacteria in your saliva.”

The excitement for MouthIO’s potential in health monitoring stems from initial experiments. The team found that their device could track bruxism (the habit of grinding teeth) by embedding an accelerometer within the brace to track jaw movements. When attached to the lower set of teeth, MouthIO detected when users grind and bite, with the data charted to show how often users did each.

Wessely and his colleagues’ customizable brace could one day help users with motor impairments, too. The team connected small touchpads to MouthIO, helping detect when a user’s tongue taps their teeth. These interactions could be sent via Bluetooth to scroll across a webpage, for example, allowing the tongue to act as a “third hand” to help enable hands-free interaction.

“MouthIO is a great example how miniature electronics now allow us to integrate sensing into a broad range of everyday interactions,” says study co-author Stefanie Mueller, the TIBCO Career Development Associate Professor in the MIT departments of Electrical Engineering and Computer Science and Mechanical Engineering and leader of the HCI Engineering Group at CSAIL. “I’m especially excited about the potential to help improve accessibility and track potential health issues among users.”

Molding and making MouthIO

To get a 3D model of your teeth, you can first create a physical impression and fill it with plaster. You can then scan your mold with a mobile app like Polycam and upload that to Blender. Using the researchers’ plugin within this program, you can clean up your dental scan to outline a precise brace design. Finally, you 3D print your digital creation in clear dental resin, where the electronic components can then be soldered on. Users can create a standard brace that covers their teeth, or opt for an “open-bite” design within their Blender plugin. The latter fits more like open-finger gloves, exposing the tips of your teeth, which helps users avoid lisping and talk naturally.

This “do it yourself” method costs roughly $15 to produce and takes two hours to be 3D-printed. MouthIO can also be fabricated with a more expensive, professional-level teeth scanner similar to what dentists and orthodontists use, which is faster and less labor-intensive.

Compared to its closed counterpart, which fully covers your teeth, the researchers view the open-bite design as a more comfortable option. The team preferred to use it for beverage monitoring experiments, where they fabricated a brace capable of alerting users when a drink was too hot. This iteration of MouthIO had a temperature sensor and a monitor embedded within the PCB housing that vibrated when a drink exceeded 65 degrees Celsius (or 149 degrees Fahrenheit). This could help individuals with mouth numbness better understand what they’re consuming.

In a user study, participants also preferred the open-bite version of MouthIO. “We found that our device could be suitable for everyday use in the future,” says study lead author and Aarhus University PhD student Yijing Jiang. “Since the tongue can touch the front teeth in our open-bite design, users don’t have a lisp. This made users feel more comfortable wearing the device during extended periods with breaks, similar to how people use retainers.”

The team’s initial findings indicate that MouthIO is a cost-effective, accessible, and customizable interface, and the team is working on a more long-term study to evaluate its viability further. They’re looking to improve its design, including experimenting with more flexible materials, and placing it in other parts of the mouth, like the cheek and the palate. Among these ideas, the researchers have already prototyped two new designs for MouthIO: a single-sided brace for even higher comfort when wearing MouthIO while also being fully invisible to others, and another fully capable of wireless charging and communication.

Jiang, Mueller, and Wessely’s co-authors include PhD student Julia Kleinau, master’s student Till Max Eckroth, and associate professor Eve Hoggan, all of Aarhus University. Their work was supported by a Novo Nordisk Foundation grant and was presented at ACM’s Symposium on User Interface Software and Technology.