MIT Sea Grant works with the Woodwell Climate Research Center and other collaborators to demonstrate a deep learning-based system for fish monitoring.

Vincent Sitzmann named Junior Bose Award winner

The award is given annually to an outstanding contributor to education from among the faculty members who are being proposed for promotion to associate professor without tenure.

MIT PhD student and CSAIL researcher Justin Kay describes his work combining AI and computer vision systems to monitor the ecosystems that support our planet.

Agrawal received the award for his work in “robot learning, self-supervised and sim-to-real policy learning, agile locomotion, and dexterous manipulation,” according to the organization.

Using generative AI to diversify virtual training grounds for robots

New tool from MIT CSAIL creates realistic virtual kitchens and living rooms where simulated robots can interact with models of real-world objects, scaling up training data for robot foundation models.

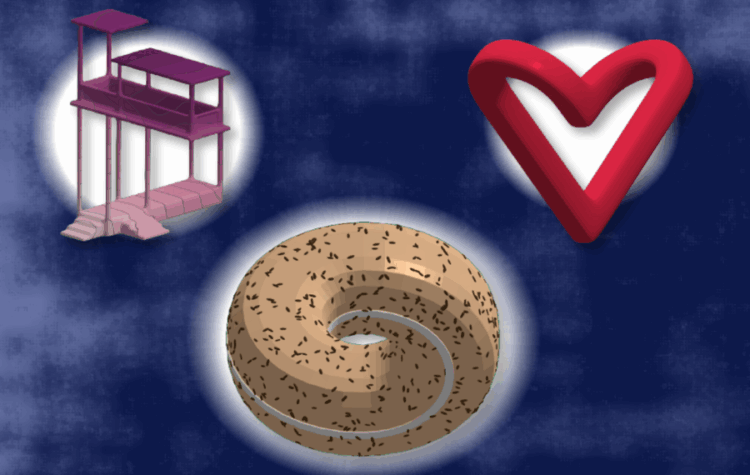

MIT tool visualizes and edits “physically impossible” objects

By visualizing Escher-like optical illusions in 2.5 dimensions, the “Meschers” tool could help scientists understand physics-defying shapes and spark new designs.

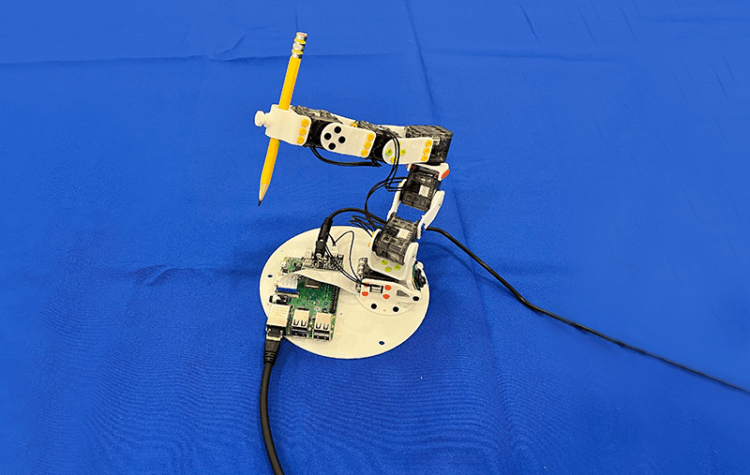

Robot, know thyself: New vision-based system teaches machines to understand their bodies

Neural Jacobian Fields, developed by MIT CSAIL researchers, can learn to control any robot from a single camera, without any other sensors.

The approach could help animators create realistic 3D characters or engineers design elastic products.

SketchAgent, a drawing system developed by MIT CSAIL researchers, sketches concepts stroke by stroke, teaching language models to express ideas visually on their own and to collaborate with humans.

Words like “no” and “not” can cause this popular class of AI models to fail unexpectedly in high-stakes settings, such as medical diagnosis.