A new debiasing technique called WRING avoids creating or amplifying biases that can occur with existing debiasing approaches.

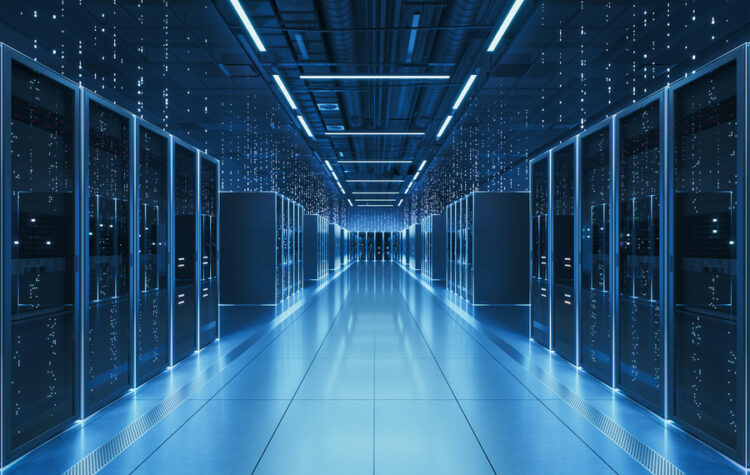

A faster way to estimate AI power consumption

The “EnergAIzer” method generates reliable results in seconds, enabling data center operators to efficiently allocate resources and reduce wasted energy.

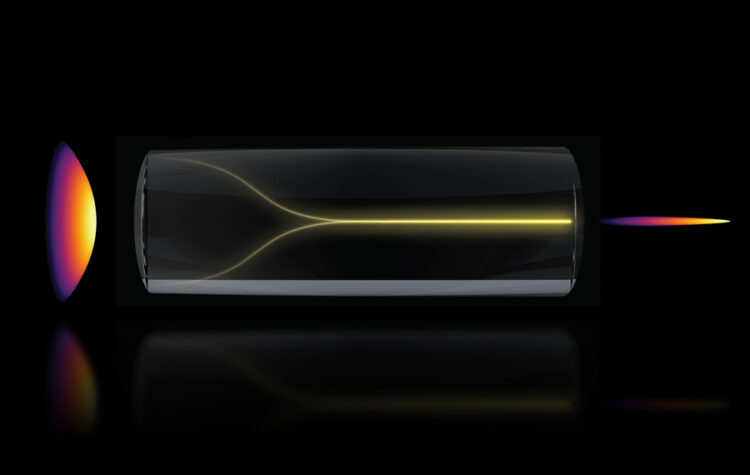

Self-organizing “pencil beam” laser could help scientists design brain-targeted therapies

MIT researchers leveraged a surprise discovery to devise a faster and more precise biomedical imaging technique.

Teaching AI models to say “I’m not sure”

A new training method improves the reliability of AI confidence estimates without sacrificing performance, addressing a root cause of hallucination in reasoning models.

Ultra-efficient chip design enables extremely strong cryptography algorithms to run on energy-constrained edge devices.

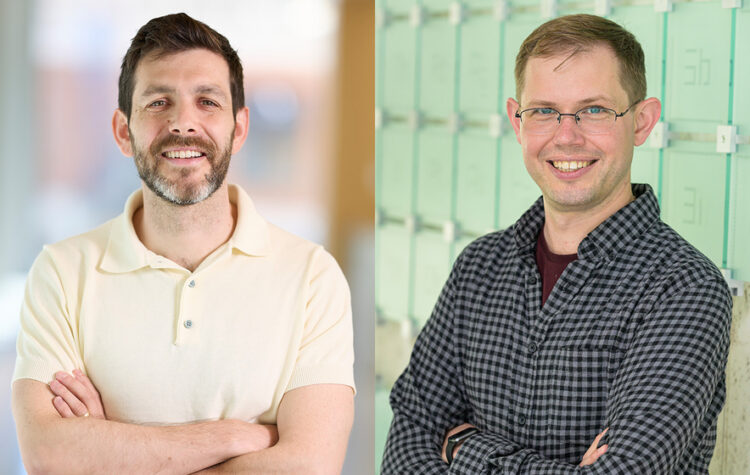

Jacob Andreas and Brett McGuire named Edgerton Award winners

The associate professors of EECS and chemistry, respectively, are honored for exceptional contributions to teaching, research, and service at MIT.

Vinod Vaikuntanathan earns 2026 Guggenheim Fellowship

The fellowship is awarded annually to leading thinkers, innovators, and creators across art, science, and scholarship who are tackling current issues.

Researchers developed a system that intelligently balances workloads to improve the efficiency of flash storage hardware in a data center.

The first leader of the Computation Structures Group, he pioneered the development of dataflow models of computation.

Researchers use control theory to shed unnecessary complexity from AI models during training, cutting compute costs without sacrificing performance.